TinyLLM

A Framework for Training and Deploying Language Models at the Edge

🚀 Introducing TinyLLM: Empowering AI at the Edge 🌟

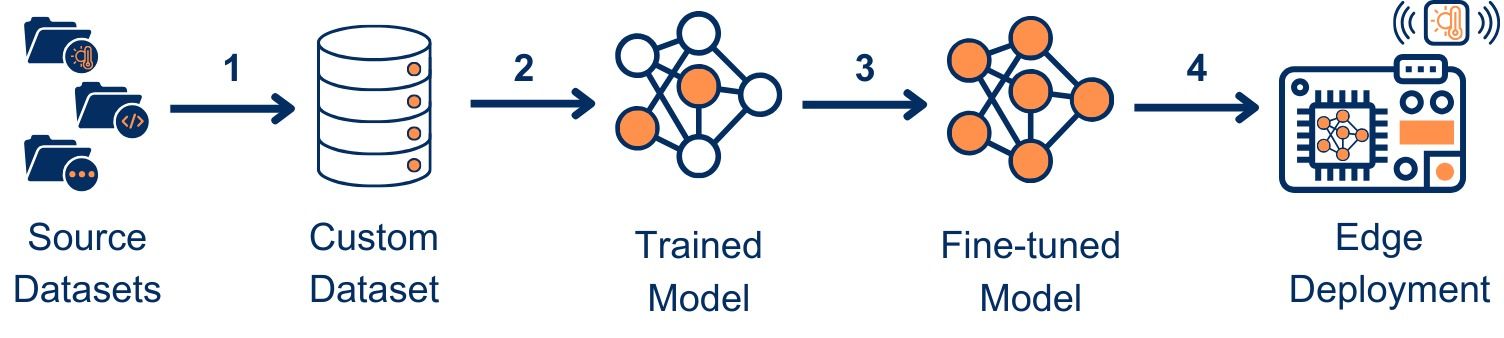

TinyLLM is a lightweight framework for training, fine-tuning, and deploying small language models (30M to 124M parameters) on edge devices, in support of embedded sensing and IoT platforms.

Features of TinyLLM

- 🧩 Pre-training flexibility: Pre-train models with your own custom data added to the corpus to improve accuracy.

- 📚 Dataset preparation made easy: Prepare pre-training datasets by merging multiple datasets seamlessly.

- 🔄 Fine-tuning support: Fine-tune various models like LLaMA, Phi, and more to suit diverse use cases.

- 📦 Optimized for small-scale LLMs, making AI accessible even on devices with limited resources.

- 🔓 Fully open-source and licensed under Apache 2.0 for maximum flexibility.
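
To make the dataset-merging idea concrete, here is a minimal sketch in plain Python. It is not TinyLLM's actual API: the function name `merge_corpora`, the record layout, and the sample corpora are all hypothetical, chosen only to illustrate how several text sources might be combined into one deduplicated, reproducibly shuffled pre-training set.

```python
import random


def merge_corpora(corpora, seed=0):
    """Merge named lists of text samples into one shuffled list.

    `corpora` maps a source name to a list of strings (hypothetical layout,
    not TinyLLM's own format). Returns records of the form
    {"source": name, "text": text}.
    """
    seen = set()
    merged = []
    for name, texts in corpora.items():
        for text in texts:
            cleaned = text.strip()
            # Skip empty samples and exact duplicates across sources.
            if not cleaned or cleaned in seen:
                continue
            seen.add(cleaned)
            merged.append({"source": name, "text": cleaned})
    # Shuffle with a fixed seed so the resulting mix is reproducible.
    random.Random(seed).shuffle(merged)
    return merged


# Illustrative inputs: a general-text corpus plus custom sensor data.
corpora = {
    "general_text": [
        "The cat sat on the mat.",
        "Edge devices have limited memory.",
    ],
    "sensor_logs": [
        "accel x=0.1 y=0.0 z=9.8 label=walking",
        "Edge devices have limited memory.",  # duplicate, dropped
        "   ",                                # blank, dropped
    ],
}

dataset = merge_corpora(corpora, seed=42)
for record in dataset:
    print(record["source"], "|", record["text"])
```

Tagging each record with its source keeps the mix auditable, so the share of custom data in the merged set can be checked before pre-training starts.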

💡 Featured Application: Embedded Sensing

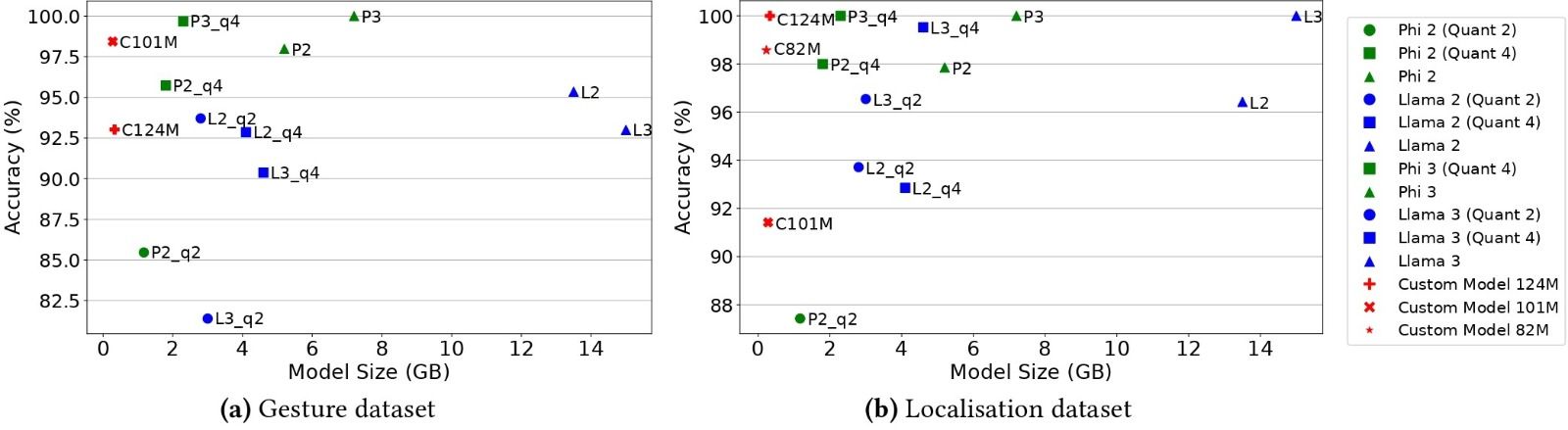

TinyLLM excels in embedded sensing, enabling near real-time activity recognition and other sensor-based AI tasks with minimal latency. In one of our benchmarks, TinyLLM achieved state-of-the-art accuracy while running on a microcontroller-class device, matching the performance of larger off-the-shelf LLMs such as Meta’s Llama 3 and Microsoft’s Phi 3. We have also built custom foundational models for applications such as hand gesture tracking, localisation, and breathing rate detection.

👉 Check out the framework, experiment with the code, or dive deeper into the technical details:

🌍 Website: TinyLLM.org

🔗 GitHub Repository: github.com/weiserlab/TinyLLM

📄 ArXiv Paper: (Kandala et al., 2024)

#TinyLLM #EdgeAI #EmbeddedSensing #MachineLearning #OpenSource #AI